Innovation

The magic touch: bringing sensory feedback to brain-controlled prosthetics

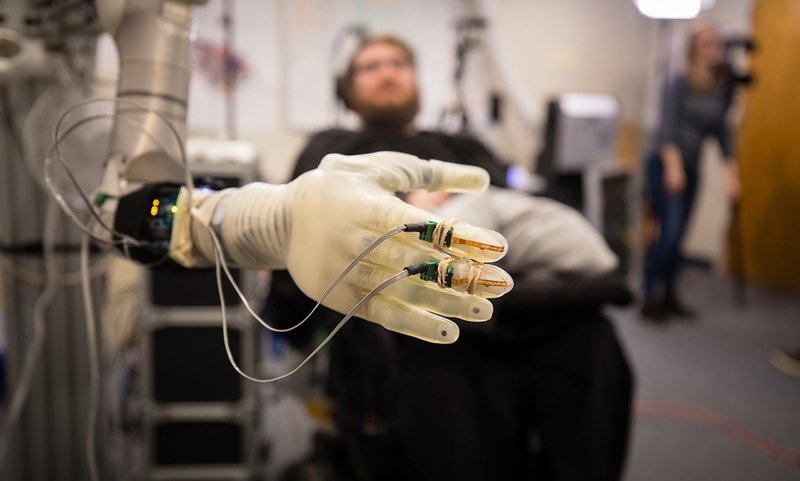

Researchers at the University of Chicago are leading a project to introduce the sense of touch to the latest brain-controlled prosthetic arms. Adding sensory feedback to already-complex neuroprosthetics is a towering task, but offers the chance to radically transform the lives of amputees and people living with paralysis. Chris Lo reports.

Image courtesy of Prensilia

For centuries prosthetics have been limited to basic attachments replacing missing limbs or extremities, but in the last 20 years, artificial limbs have moved forward at an electric pace.

Today’s technologies incorporate more advanced ranges of movement and, with the advent of neuroprosthetics, researchers have brought about the rise of sophisticated brain-controlled prosthetic limbs. These are combined with electrode arrays – placed in the brain, nerves or muscles – to decode the messages between the brain and the limb that control movement, allowing users’ brains to power basic movement in, say, a prosthetic arm.

As University of Chicago (UChicago) associate professor and neuroprosthetics researcher Dr Sliman Bensmaia notes, brain-controlled prosthetics have become a vibrant field of research.

“The idea is to put electrodes in [the motor cortex] so when [a tetraplegic patient] tries to move their arm, or imagines moving their arm, there is a characteristic pattern of activation in this motor part of the brain,” he says. “We can take the signals from this part of the brain and infer what the patient or the subject wanted to do, and then you make the robotic arm do that. There’s a cottage industry of developing different ways of doing that, and they’re all slight variants of one another.”

Neuroprosthetics have unlocked advances that amputees and paralysed patients previously wouldn’t have dreamed of, but as with any rapidly emerging area of research, there’s still a long way to go before the field meets its full potential. One of the major limiting factors for the dexterity of neuroprosthetics relates to a sense that most humans take for granted: touch.

“When you grasp an object, you have all this information about the object – its size, its shape, its texture, if it’s moving across the skin,” Bensmaia says. “It turns out that these sensory signals coming back from the hand are critical to our ability to use the hand. Even if your motor system was completely intact, if you don’t have the sense of touch, your hands become useless. That was a realisation that came about after they had made the robotic arms, made the decoding algorithms for the motor cortex. And now we really need to take the sensory stuff seriously, or these things will never have any kind of dexterity. So that’s where I came in.”

Image courtesy of Pitt-UPMC

Sensory feedback: the next frontier in neural prosthetics

Bensmaia and his lab, along with other UChicago colleagues, are collaborating with the University of Pittsburgh and the University of Pittsburgh Medical Center to advance the understanding and implementation of prosthetics controlled via brain-computer interfaces. The UChicago research team’s portion of the project, which was recently awarded a $3.4m grant from the National Institutes of Health, covers the somatosensory aspects – that is, the system that transmits sensory information from the body’s nerve fibres to the brain’s somatosensory cortex, which sits next to the movement-oriented motor cortex.

“What I’ve spent most of my time in my lab doing is trying to understand how is it that the nervous system encodes this information about objects we touch,” Bensmaia says. “What we’re trying to do in these neural prosthetics applications is leverage what we’ve learned about how the intact nervous system does that and try to reproduce that by electrically stimulating different structures.”

For patients who have lost an arm and are living with a prosthetic, the team’s method would be to electrically stimulate the nerve with electrodes, while for tetraplegic patients, the electrode array would be implanted in the somatosensory cortex. In either case, sensors on a bionic hand – disembodied, in the case of tetraplegic users – generate information that complex algorithms can convert into patterns of electrical stimulation to the nerve or brain in real-time to convey sensory feedback or elicit sensations.

“We developed a model last year, which was published in PNAS [Proceedings of the National Academy of Sciences of the United States of America], that allows us to reproduce with striking precision how the nerve would respond to any kind of object interacted with,” Bensmaia says.

Indeed, in 2016 Bensmaia and colleagues from Chicago and Pittsburgh demonstrated a version of the system in a 28-year-old tetraplegic subject, Nathan Copeland, who was able to distinguish touches on different fingers of a robotic arm based on the stimulated sensations he felt in his own non-functional hand. “Sometimes it feels electrical and sometimes it’s pressure, but for the most part I can tell most of the fingers with definite precision,” said Copeland in 2016. “It feels like my fingers are getting touched or pushed.”

“Without thinking, he can tell which part of the prosthetic hand is touching the object. That’s super-useful,” Bensmaia says. “The more pressure he exerts on an object, the stronger the evoked sensation is. So he has a pretty good idea of where he’s touching the object and how much force he’s exerting on it.”
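To make the idea concrete, here is a minimal sketch of the two properties Bensmaia describes: where the hand is touched determines which channel is stimulated, and how hard it is touched determines how strong the stimulation is. This is an illustration only, not the team's algorithm. The linear force-to-amplitude mapping, the finger-to-channel table, the amplitude limits and the names `encode_touch` and `StimCommand` are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical limits for illustration; real stimulation parameters
# are set clinically and are not given in the article.
MIN_AMPLITUDE_UA = 10.0   # assumed weakest amplitude that evokes a sensation
MAX_AMPLITUDE_UA = 80.0   # assumed upper limit
MAX_FORCE_N = 10.0        # assumed full-scale reading of a fingertip sensor

# Hypothetical mapping from each prosthetic finger to one electrode channel.
FINGER_TO_CHANNEL = {"thumb": 0, "index": 1, "middle": 2, "ring": 3, "little": 4}


@dataclass(frozen=True)
class StimCommand:
    channel: int          # which electrode to drive (encodes location)
    amplitude_ua: float   # how strongly to drive it (encodes force)


def encode_touch(finger: str, force_n: float) -> Optional[StimCommand]:
    """Convert one fingertip sensor reading into a stimulation command.

    Returns None when there is no contact. Assumes a simple linear map
    from force to amplitude, clipped at the sensor's full-scale force.
    """
    if force_n <= 0:
        return None
    fraction = min(force_n, MAX_FORCE_N) / MAX_FORCE_N
    amplitude = MIN_AMPLITUDE_UA + fraction * (MAX_AMPLITUDE_UA - MIN_AMPLITUDE_UA)
    return StimCommand(FINGER_TO_CHANNEL[finger], amplitude)


if __name__ == "__main__":
    for finger, force in [("index", 0.0), ("index", 5.0), ("thumb", 20.0)]:
        print(finger, force, encode_touch(finger, force))
```

In this toy version a firmer grip on the same finger raises the amplitude on the same channel, while touching a different finger switches channel. A real system would have to calibrate both mappings per patient and per electrode.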

The language of touch: questions to answer

The progress on sensory feedback is encouraging but limited, and there are a host of questions for Bensmaia and other researchers to answer before the true technological revolution can begin. In terms of the nerve, Bensmaia says the challenge is a purely technological bottleneck.

“If we had perfect technology that allowed us to individually stimulate each nerve fibre selectively, we could restore the sense of touch perfectly. But of course, the technology doesn’t allow us to do that. You have this array with up to a couple of hundred electrodes, and with this relatively small channel count, we’re trying to stimulate 10,000-15,000 fibres, which each have their own idiosyncratic response in an intact organism.

“We’re not going to be able to do each of these 12,000 or 15,000 fibres individually. What we’re able to stimulate is tens or hundreds of them at a time. How do tens or hundreds of fibres respond? That we can use our model to figure out, then we use the electrical stimulus to try and reproduce that, or evoke that to the extent that we can.”
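A rough back-of-the-envelope calculation shows the scale of that mismatch. The sketch below takes the figures Bensmaia quotes (10,000 to 15,000 fibres, and up to a couple of hundred electrodes, read here as 100 or 200) and assumes, purely for illustration, that the fibres are shared evenly between electrodes. Real electrodes do not recruit fibres this evenly, and the function name `fibres_per_electrode` is hypothetical.

```python
def fibres_per_electrode(total_fibres: int, electrodes: int) -> float:
    """Average number of fibres each electrode would have to cover,
    assuming (unrealistically) an even split across electrodes."""
    if electrodes <= 0:
        raise ValueError("electrodes must be positive")
    return total_fibres / electrodes


if __name__ == "__main__":
    # Fibre counts are from the quote; electrode counts are an assumed
    # reading of "up to a couple of hundred".
    for electrodes in (100, 200):
        low = fibres_per_electrode(10_000, electrodes)
        high = fibres_per_electrode(15_000, electrodes)
        print(f"{electrodes} electrodes: about {low:.0f} to {high:.0f} fibres each")
```

Even at the top of the assumed range, each electrode would answer for dozens of fibres, which is consistent with Bensmaia's point that stimulation reaches "tens or hundreds of them at a time" rather than individual fibres.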

The brain, of course, is considerably more complex. Understanding of how the brain operates is improving every year, but gaps in knowledge remain and need to be filled to improve the decoding algorithms that translate the brain’s language of touch. Given that the hand and fingers are primarily used to exert force rather than move in a space, leveraging sensory feedback to improve dexterity will be a knotty problem to unravel. The naturalism of evoked sensation will need to be refined, too.

“I don’t think we’re going to be able to tell the difference between good silk and cheap silk with a prosthetic hand anytime soon,” says Bensmaia. “But being able to, for instance, convey more precisely exactly when contact is established with an object, about how much force is exerted on it, in a much more realistic and naturalistic way are our more short-term goals.”

As well as advances in wireless technology and efforts to make the most sophisticated bionic hands rugged enough to serve outside of a lab environment, improvements to the underlying brain-computer interface that powers brain-controlled movement for tetraplegics will also be necessary. The most important leap, Bensmaia contends, will be in the biocompatibility and physical flexibility of the implanted electrode arrays.

“These neural implants, you’re basically sticking a bed of nails in the brain – and the brain isn’t happy,” he says. “It fights the device, and long story short, these devices don’t last long enough. They last a few years, and you don’t want to be doing a craniotomy every few years to put a fresh one in. So I think the main challenge is to create implants that are going to last forever.”

President Obama fist-bumps the robotic arm of Nathan Copeland during a tour at the White House Frontiers Conference at the University of Pittsburgh. Image courtesy of Pete Souza/The White House

A revolution on the horizon

Bensmaia and colleagues at UChicago are now gearing up for more human trials of the sensory feedback system. UChicago Medicine neurosurgeon Peter Warnke has been brought on board to implant the devices, and Bensmaia says the team is “actively searching for subjects”, although a small pool of eligible patients and the early-stage nature of the research could make this process slow going.

Looking to the future, Bensmaia is hopeful that more sophisticated prosthetic devices are on the way, especially with greater private sector involvement, as seen with Elon Musk’s brain-computer interface firm Neuralink and Deka’s impressive LUKE Arm.

“Scientists are just interested in science,” Bensmaia says. “Once they’ve made a device sort of work, they get bored with it and work on something else. Whereas in the private sector, people invest to make money and therefore they really want to bring it to market. So that’s promising.”

It’s the beginning of a long and winding road towards brain-controlled prosthetic hands that can leverage sensory feedback to approximate the hand’s natural dexterity. But the unmet needs of amputees, and tetraplegics especially, are many, and restoring a sense of touch and movement to those who have had to live without them is a powerful motivating force.

“If you talk to amputees who now have bionic hands that they can feel through, that almost makes them feel whole again,” says Bensmaia. “Because they now feel the bionic hand, they sometimes describe the bionic hand as ‘their’ hand; it’s endowed with a sense of touch, and when they see the hand touching something they can feel it.”